In Sleep Deprivation, Attention Lapses Correspond with CSF Cleaning Flushes

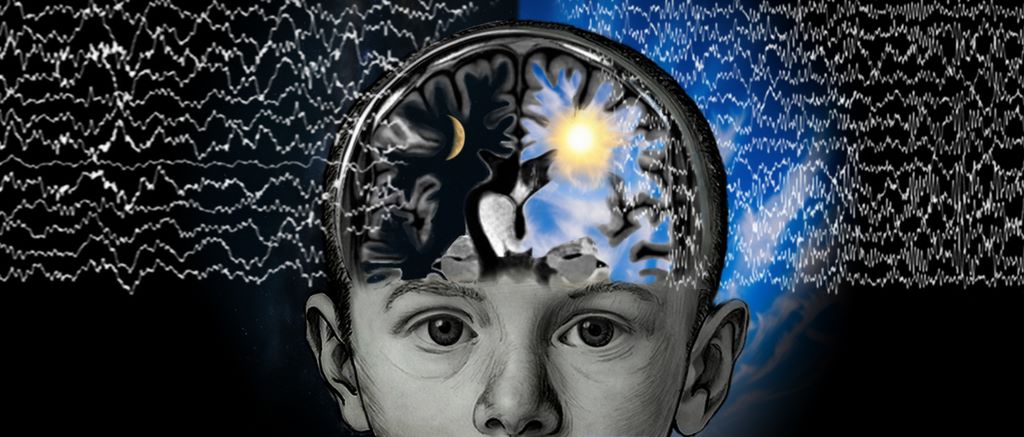

New research shows attention lapses due to sleep deprivation coincide with a flushing of fluid from the brain — a process that normally occurs during sleep.

Anne Trafton | MIT News

Nearly everyone has experienced it: After a night of poor sleep, you don’t feel as alert as you should. Your brain might seem foggy, and your mind drifts off when you should be paying attention.

A new study from MIT reveals what happens inside the brain as these momentary failures of attention occur. The scientists found that during these lapses, a wave of cerebrospinal fluid (CSF) flows out of the brain – a process that typically occurs during sleep and helps to wash away waste products that have built up during the day. This flushing is believed to be necessary for maintaining a healthy, normally functioning brain.

When a person is sleep-deprived, it appears that their body attempts to catch up on this cleansing process by initiating pulses of CSF flow. However, this comes at a cost of dramatically impaired attention.

“If you don’t sleep, the CSF waves start to intrude into wakefulness where normally you wouldn’t see them. However, they come with an attentional tradeoff, where attention fails during the moments that you have this wave of fluid flow,” says Laura Lewis, the Athinoula A. Martinos Associate Professor of Electrical Engineering and Computer Science, a member of MIT’s Institute for Medical Engineering and Science and the Research Laboratory of Electronics, and an associate member of the Picower Institute for Learning and Memory.

Lewis is the senior author of the study, which appears today in Nature Neuroscience. MIT visiting graduate student Zinong Yang is the lead author of the paper.

Flushing the brain

Although sleep is a critical biological process, it’s not known exactly why it is so important. It appears to be essential for maintaining alertness, and it has been well-documented that sleep deprivation leads to impairments of attention and other cognitive functions.

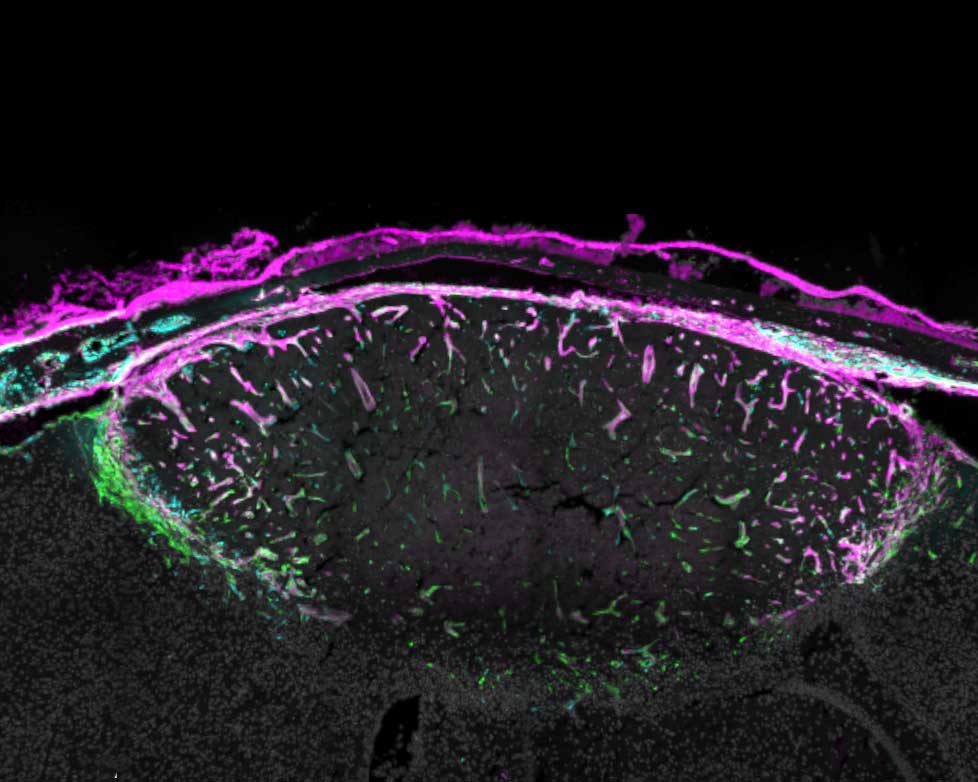

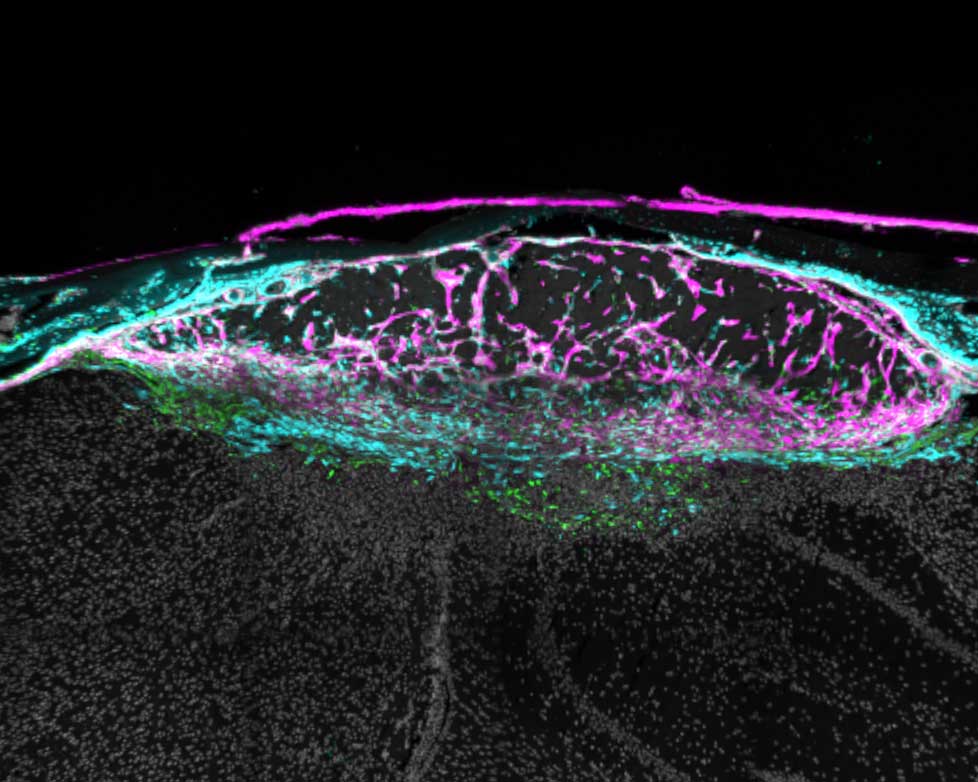

During sleep, the cerebrospinal fluid that cushions the brain helps to remove waste that has built up during the day. In a 2019 study, Lewis and colleagues showed that CSF flow during sleep follows a rhythmic pattern in and out of the brain, and that these flows are linked to changes in brain waves during sleep.

That finding led Lewis to wonder what might happen to CSF flow after sleep deprivation. To explore that question, she and her colleagues recruited 26 volunteers who were tested twice — once following a night of sleep deprivation in the lab, and once when they were well-rested.

In the morning, the researchers monitored several different measures of brain and body function as the participants performed a task that is commonly used to evaluate the effects of sleep deprivation.

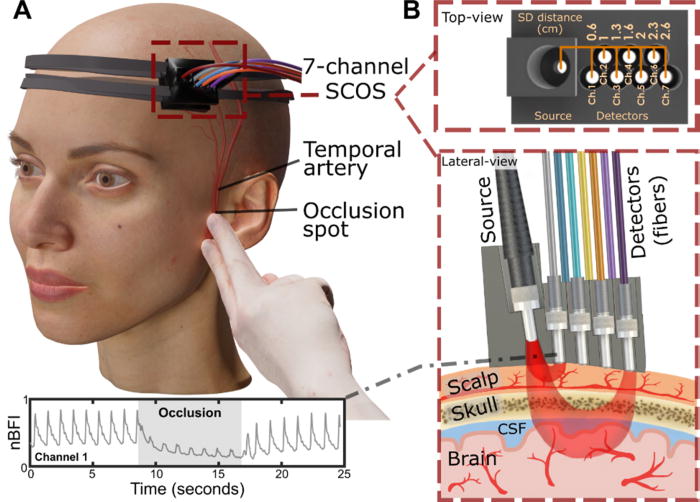

During the task, each participant wore an electroencephalogram (EEG) cap that could record brain waves while they were also in a functional magnetic resonance imaging (fMRI) scanner. The researchers used a modified version of fMRI that allowed them to measure not only blood oxygenation in the brain, but also the flow of CSF in and out of the brain. They also measured each subject’s heart rate, breathing rate, and pupil diameter.

The participants performed two attentional tasks while in the fMRI scanner, one visual and one auditory. For the visual task, they had to look at a screen that had a fixed cross. At random intervals, the cross would turn into a square, and the participants were told to press a button whenever they saw this happen. For the auditory task, they would hear a beep instead of seeing a visual transformation.

Sleep-deprived participants performed much worse than well-rested participants on these tasks, as expected. Their response times were slower, and for some of the stimuli, the participants never registered the change at all.

During these momentary lapses of attention, the researchers identified several physiological changes that occurred at the same time. Most significantly, they found a flux of CSF out of the brain just as those lapses occurred. After each lapse, CSF flowed back into the brain.

“The results are suggesting that at the moment that attention fails, this fluid is actually being expelled outward away from the brain. And when attention recovers, it’s drawn back in,” Lewis says.

The researchers hypothesise that when the brain is sleep-deprived, it begins to compensate for the loss of the cleansing that normally occurs during sleep, even though these pulses of CSF flow come with the cost of attention loss.

“One way to think about those events is because your brain is so in need of sleep, it tries its best to enter into a sleep-like state to restore some cognitive functions,” Yang says. “Your brain’s fluid system is trying to restore function by pushing the brain to iterate between high-attention and high-flow states.”

A unified circuit

The researchers also found several other physiological events linked to attentional lapses, including decreases in breathing and heart rate, along with constriction of the pupils. They found that pupil constriction began about 12 seconds before CSF flowed out of the brain, and pupils dilated again after the attentional lapse.

“What’s interesting is it seems like this isn’t just a phenomenon in the brain, it’s also a body-wide event. It suggests that there’s a tight coordination of these systems, where when your attention fails, you might feel it perceptually and psychologically, but it’s also reflecting an event that’s happening throughout the brain and body,” Lewis says.

This close linkage between disparate events may indicate that there is a single circuit that controls both attention and bodily functions such as fluid flow, heart rate, and arousal, according to the researchers.

“These results suggest to us that there’s a unified circuit that’s governing both what we think of as very high-level functions of the brain — our attention, our ability to perceive and respond to the world — and then also really basic fundamental physiological processes like fluid dynamics of the brain, brain-wide blood flow, and blood vessel constriction,” Lewis says.

In this study, the researchers did not explore what circuit might be controlling this switching, but one good candidate, they say, is the noradrenergic system. Recent research has shown that this system, which regulates many cognitive and bodily functions through the neurotransmitter norepinephrine, oscillates during normal sleep.

The research was funded by the National Institutes of Health, a National Defense Science and Engineering Graduate Research Fellowship, a NAWA Fellowship, a McKnight Scholar Award, a Sloan Fellowship, a Pew Biomedical Scholar Award, a One Mind Rising Star Award, and the Simons Collaboration on Plasticity in the Aging Brain.

This story is republished courtesy of MIT News (web.mit.edu/newsoffice/), a popular site that covers news about MIT research, innovation and teaching.

Source: MIT News