Flawed Data on Key SARS-CoV-2 Enzyme Trips Up Research

The COVID-19 pandemic illustrated how urgently we need antiviral medications capable of treating coronavirus infections. To aid this effort, researchers quickly homed in on part of SARS-CoV-2’s molecular machinery known as the NiRAN domain, an enzyme region that is essential to viral replication and common to many coronaviruses. A drug targeting the NiRAN domain would likely work broadly to shut down a range of these pathogens, potentially treating known diseases like COVID-19 as well as helping to head off future pandemics caused by related viruses.

In 2022, scientists (Yan et al.) published a structural model describing exactly how this domain works. It should have been a tremendous boon for drug developers.

But the model was wrong.

“Their work contains critical errors,” says Gabriel Small, a graduate fellow in the laboratories of Seth A. Darst and Elizabeth Campbell at Rockefeller. “The data does not support their conclusions.”

Now, in a new study published in Cell, Small and colleagues demonstrate exactly why scientists still don’t know how the NiRAN domain works. The findings could have sweeping implications for drug developers already working to design antivirals based on flawed assumptions, and underscore the importance of rigorous validation.

“It is absolutely important that structures be accurate for medicinal chemistry, especially when we’re talking about a critical target for antivirals that is the subject of such intense interest in industry,” says Campbell, head of the Laboratory of Molecular Pathogenesis. “We hope that our work will prevent developers from futilely trying to optimize a drug around an incorrect structure.”

A promising lead

By the time the original paper was published in Cell, the Campbell and Darst labs were already quite familiar with the NiRAN domain and its importance as a therapeutic target. Both laboratories study gene expression in pathogens, and their work on SARS-CoV-2 focuses in part on characterizing the molecular interactions that coordinate viral replication.

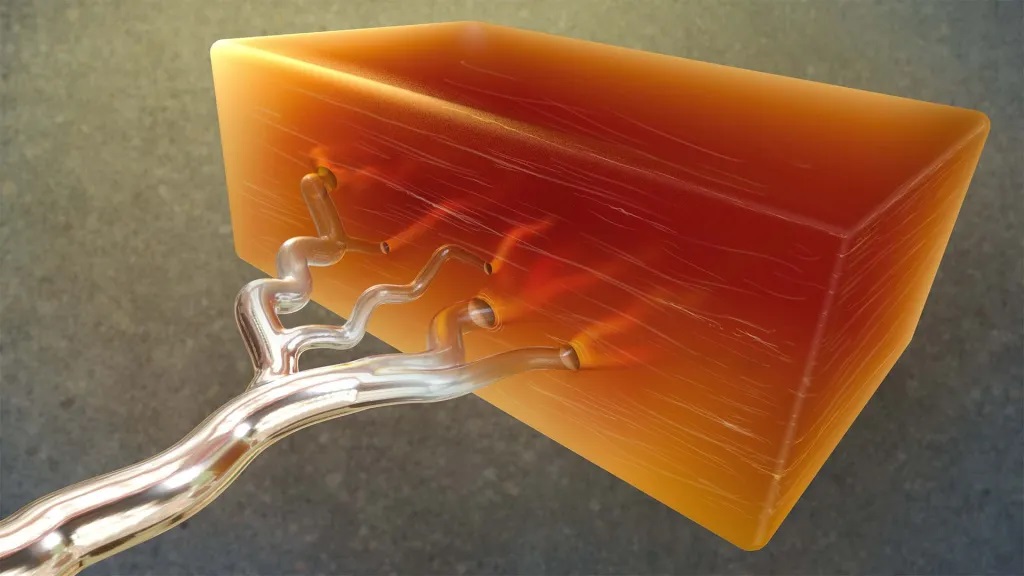

The NiRAN domain is essential for helping SARS-CoV-2 and other coronaviruses cap their RNA, a step that allows these viruses to replicate and survive. In one version of this process, the NiRAN domain uses a molecule called GDP (guanosine diphosphate) to attach a protective cap to the beginning of the virus’s RNA. Small previously described that process in detail, and its structure is considered solved. But the NiRAN domain can also use a related molecule, GTP (guanosine triphosphate), to form a protective cap. Determined to develop antivirals that comprehensively shut down the NiRAN domain, scientists were keen to learn the particulars of this second, GTP-dependent mechanism.

In the 2022 paper, researchers described a chain of chemical steps, beginning with a water molecule breaking a bond to release the RNA’s 5′ phosphate end. That end then attaches to the beta-phosphate end of the GTP molecule, which removes another phosphate and, with the help of a magnesium ion, transfers the remaining portion of the GTP molecule to the RNA, forming a protective cap that allows the virus to replicate and thrive.

The team’s evidence? A cryo-electron microscopy (cryo-EM) structure that appeared to show the process caught in action. To freeze this catalytic intermediate, the team used GMPPNP, a GTP mimic that the enzyme cannot break down.

Small read the paper with interest. “As soon as they published, I went to download their data,” he says. It wasn’t there. This raised a red flag: the underlying data are generally made available when a structural biology paper is released. Months later, when Small was finally able to access the data, he began to uncover significant flaws. “I tried to make a figure using their data, and realized that there were serious issues,” he says. Small brought his concerns to Campbell and Darst.

They agreed. “Something was clearly wrong,” Campbell says. “But we decided to give the other team the benefit of the doubt, and reprocess all of their data ourselves.”

An uphill battle

It was painstaking work, with Small leading the charge. Working frame by frame, he compared the published atomic model to the actual cryo-EM map and found something striking: the key molecules that Yan and colleagues claimed to have seen (specifically, the GTP mimic GMPPNP and a magnesium ion in the NiRAN domain’s active site) simply were not there.

Not only did the map contain no evidence of these molecules, but their placement in the original model also violated basic rules of chemistry, causing severe atomic clashes and unrealistic charge interactions. Small ran additional tests, but even advanced methods designed to pick out rare particles came up empty. He could find no evidence to support the model previously produced by Yan and colleagues.

Once the Rockefeller researchers had validated their results, they submitted their findings to Cell. “It was very important that we publish our corrective manuscript in the same journal that published the original model,” Campbell says, noting that corrections to high-profile papers are often overlooked when they appear in lower-tier journals.

Left uncorrected, the confusion in the field could cause problems that reach far beyond the lab bench, Campbell adds, a reminder that rigorous basic biomedical research is not just academic but essential to real-world progress. “Companies keep their cards close to their chests, but we know that several industry groups are studying this,” she says. “Efforts based on a flawed structural model could result in years of wasted time and resources.”

Source: The Rockefeller University