Don’t Blame the Iodine For Reactions to Contrast Scans

Patients are often worried about reactions to the contrast agents used in their scans.

What is a contrast scan?

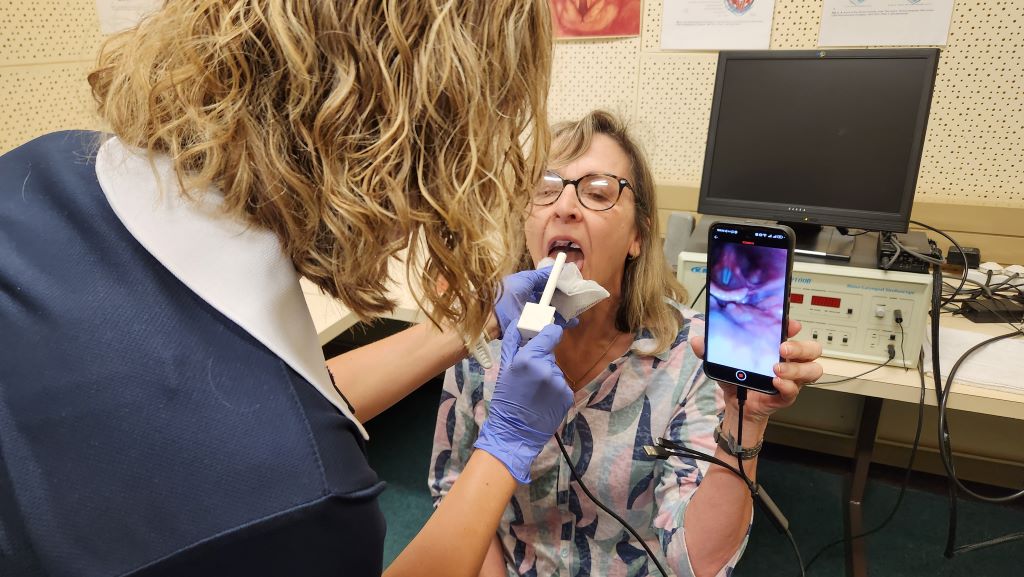

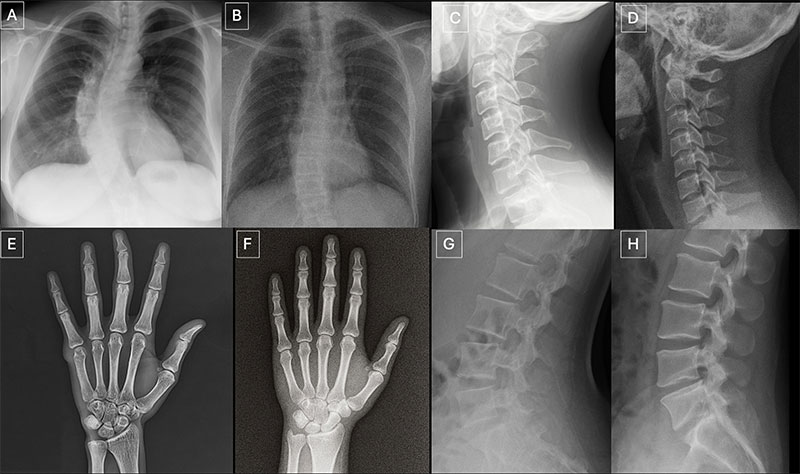

‘A contrast scan is a medical imaging test, such as a CT scan or MRI,’ says Dr Jean de Villiers, a radiologist and director of SCP Radiology, ‘that uses a special dye called a “contrast agent” to make certain areas of the body easier to see. The contrast helps highlight blood vessels, organs or abnormal tissues, providing clearer and more detailed images.’ Dr de Villiers explains what the dye is and what it is used for, and debunks the myth that it is the iodine that causes allergic reactions in some people.

For MRI scans, a different, gadolinium-based type of contrast is used. Allergic reactions to it are possible but extremely rare.

Why is it used?

The contrast agent shows blood flow through arteries and veins, reveals blockages, bleeding or abnormal growths, and brings out detailed organ structure (such as in the brain, liver or kidneys).

In short, contrast helps to highlight differences between normal and abnormal tissue, improving diagnosis and treatment planning.

How is the dye administered?

The contrast agent is usually injected into a vein but, in some cases, it can be swallowed or given as a rectal enema, depending on the area being examined. It temporarily changes the way radiation or magnetic fields interact with the body’s internal structures.

Is there an iodine allergy risk in a contrast scan?

This is a common concern, but it’s a bit misunderstood.

People often believe they are allergic to iodine because they may have reacted to contrast dye in the past or to shellfish, which contain iodine. However, iodine itself is not an allergen. According to radiologists and allergists, the body doesn’t mount an allergic immune response to iodine as it’s a basic element, essential to human health, particularly for thyroid function.

What causes allergic reactions in contrast scans?

The culprit is usually one of the other compounds, not iodine. Most contrast agents used in CT scans are iodinated; however, reactions tend to be linked to the chemical structure of the compound, not its iodine content.

Reactions may range from mild (nausea, itching, flushing) to more serious (difficulty breathing or anaphylactoid reactions), which mimic allergies but do not involve the immune system in the same way.

These reactions are typically caused by:

- Concentration of the contrast agent

- Molecular structure (ionic vs non-ionic)

- Patient-specific factors such as history of allergies, asthma or previous reaction to contrast

Advancements in the type of contrast agent used have significantly reduced the rate of reactions in patients.

To confirm: it’s not the iodine; it’s the other compounds attached to the iodine in the dye, and the body’s unique response to them. That is why patients are always asked about any previous contrast reactions, asthma or other allergies before being given the contrast injection.

‘Whether you are asked or not,’ says Dr de Villiers, ‘it’s always best to inform the radiology team if you have had any previous allergic contrast reactions.’