Babies are Born with a Sense of Rhythm, Study Suggests

Newborns listening to Bach’s music predicted rhythm, but not melody, according to their brain waves

Babies are born with the ability to predict rhythm, according to a study published February 5th in the open-access journal PLOS Biology by Roberta Bianco from the Italian Institute of Technology, and colleagues.

Anticipating a beat drop, key change or chorus in a song you’ve never heard comes naturally: across all cultures, humans can inherently anticipate rhythm and melody. But are babies born with these behaviours, or are they learned? Research shows that by approximately 35 weeks of gestation, foetuses begin to respond to music with changes in heart rate and body movements. However, newborns’ ability to anticipate rhythm and melody is not fully understood.

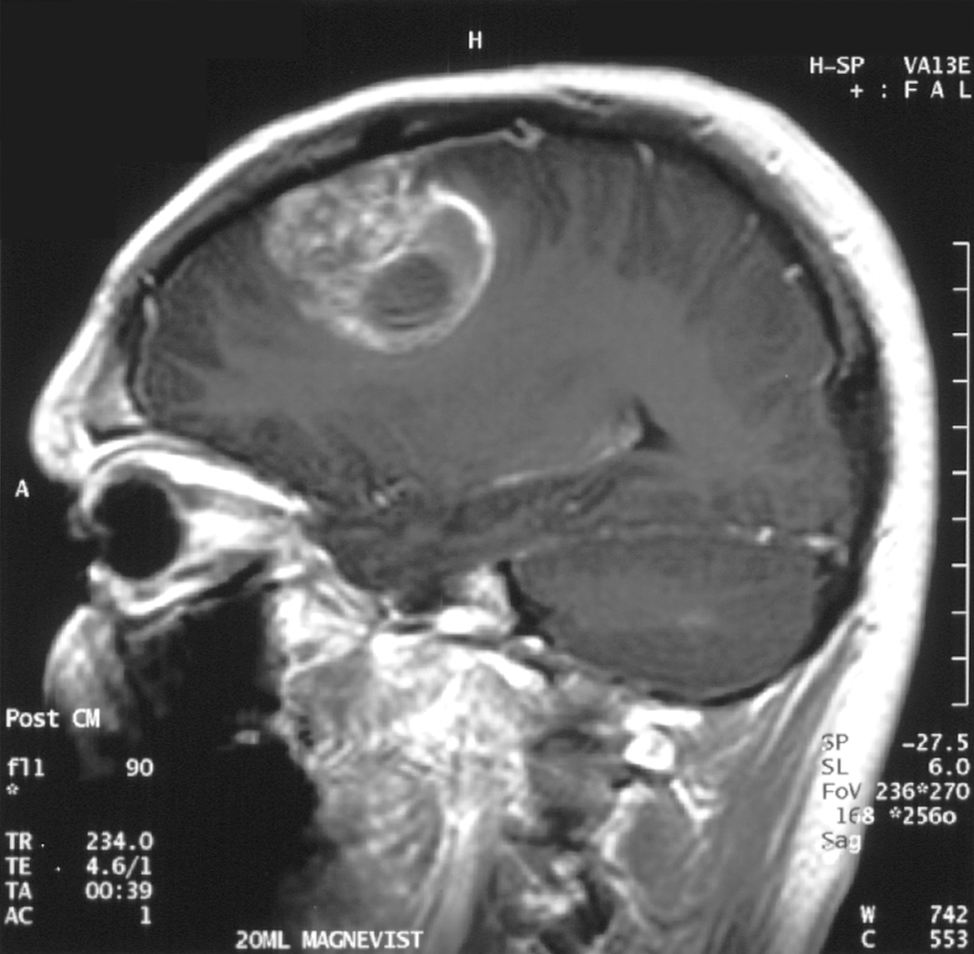

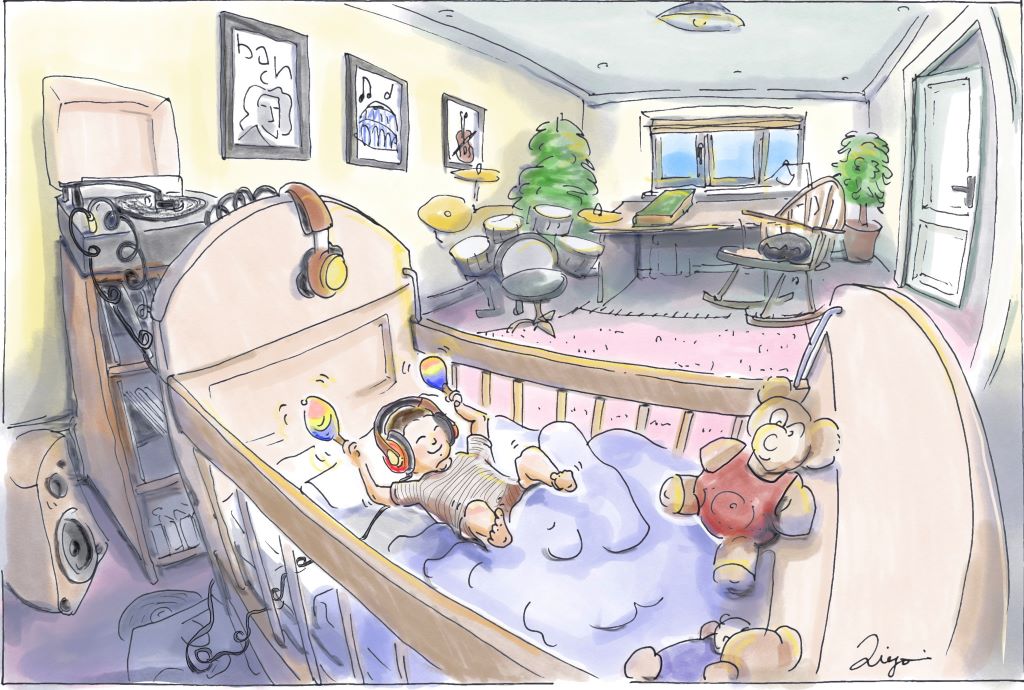

To understand babies’ musical aptitudes, researchers played J.S. Bach’s piano compositions for an audience of 49 sleeping newborns. The selection included 10 original melodies and four shuffled songs with scrambled melodies and pitches. While the babies listened, the researchers used electroencephalography – electrodes placed on the babies’ heads – to measure their brain waves. Signs of surprise in the babies’ brain waves meant they had expected the song to go one way, but it went another.

The newborns tended to show neural signs of surprise when the rhythm unexpectedly changed; in other words, the miniature maestros had generated musical expectations based on rhythm. Previously, this result had been observed in non-human primates. The researchers found no evidence that the newborns tracked melody or were surprised by unexpected melodic changes, a skill that emerges later in development, at a point that has not yet been pinned down.

According to the authors, understanding how humans become aware of rhythm can help biologists understand how our auditory systems develop. Future studies can investigate how exposure to music during gestation affects acquisition of rhythm and melody.

The authors add, “Are newborns ready for Bach? Newborns come into the world already tuned in to rhythm. Our latest research shows that even our tiniest 2-day-old listeners can anticipate rhythmic patterns, revealing that some key elements of musical perception are wired from birth. But there’s a twist: melodic expectations – our ability to predict the flow of a tune – don’t seem to be present yet. This suggests that melody isn’t innate but gradually learned through exposure. In other words, rhythm may be part of our biological toolkit, while melody is something we grow into.”

Provided by PLOS