Bacterial Subtype Linked to Growth in up to 50% of Human Colorectal Cancers

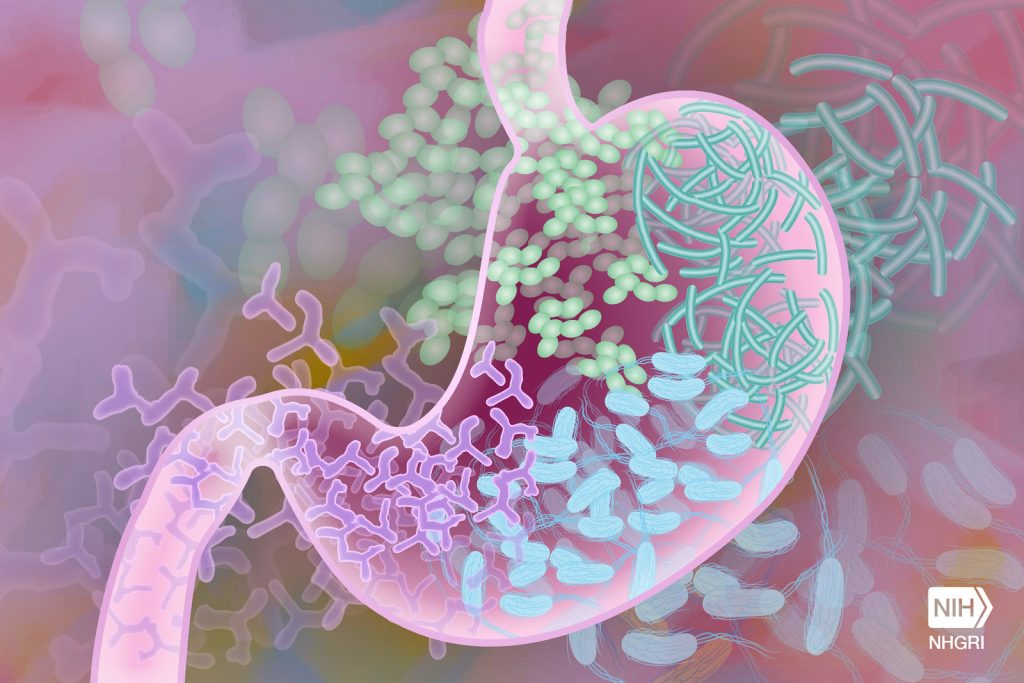

Researchers at Fred Hutchinson Cancer Center have found that a specific subtype of a microbe commonly found in the mouth can travel to the gut and grow within colorectal cancer tumours. This microbe also drives cancer progression and is associated with poorer patient outcomes after cancer treatment.

The findings, published in Nature, could help improve therapeutic approaches and early screening methods for colorectal cancer, which, according to the American Cancer Society, is the second most common cause of cancer deaths in adults in the U.S.

Examining colorectal cancer tumours removed from 200 patients, the Fred Hutch team measured levels of Fusobacterium nucleatum, a bacterium known to infect tumours. In about 50% of the cases, only a specific subtype of the bacterium was elevated in tumour tissue compared with healthy tissue.

The researchers also found this microbe in higher numbers within stool samples of colorectal cancer patients compared with stool samples from healthy people.

“We’ve consistently seen that patients with colorectal tumours containing Fusobacterium nucleatum have poor survival and poorer prognosis compared with patients without the microbe,” explained Susan Bullman, PhD, Fred Hutch cancer microbiome researcher and co-corresponding study author. “Now we’re finding that a specific subtype of this microbe is responsible for tumour growth. It suggests therapeutics and screening that target this subgroup within the microbiota would help people who are at a higher risk for more aggressive colorectal cancer.”

In the study, Bullman and co-corresponding author Christopher D. Johnston, PhD, a Fred Hutch molecular microbiologist, worked with first author Martha Zepeda-Rivera, PhD, a Washington Research Foundation Fellow and Staff Scientist in the Johnston Lab. They set out to discover how the microbe travels from its usual home in the mouth to the distant lower gut, and how it contributes to cancer growth.

First, they made a surprising discovery that could be important for future treatments. The predominant group of Fusobacterium nucleatum in colorectal cancer tumours, the subspecies animalis (Fna), was thought to be a single subspecies but is actually composed of two distinct lineages known as “clades.”

“This discovery was similar to stumbling upon the Rosetta Stone in terms of genetics,” Johnston explained. “We have bacterial strains that are so phylogenetically close that we thought of them as the same thing, but now we see an enormous difference between their relative abundance in tumours versus the oral cavity.”

Comparing the genomes of the two clades revealed 195 genetic differences between them. The tumour-infiltrating clade, Fna C2, had acquired distinct genetic traits suggesting it could pass from the mouth through the stomach, withstand stomach acid and then grow in the lower gastrointestinal tract.

Then, comparing tumour tissue with healthy tissue from patients with colorectal cancer, the researchers found that only the Fna C2 subtype was significantly enriched in colorectal tumour tissue and was responsible for colorectal cancer growth.

Further molecular analyses of two patient cohorts, comprising more than 200 colorectal tumours, revealed the presence of the Fna C2 lineage in approximately 50% of cases.

Analysing hundreds of stool samples from people with and without colorectal cancer, the researchers also found that Fna C2 levels were consistently higher in people with colorectal cancer.

“We have pinpointed the exact bacterial lineage that is associated with colorectal cancer, and that knowledge is critical for developing effective preventive and treatment methods,” Johnston said.

Source: Fred Hutchinson Cancer Center