Neuroscientists Regenerate Neurons in Mice with Spinal Cord Injury

In a new study using mice, neuroscientists have uncovered a crucial component for restoring functional activity after spinal cord injury. In the study, published in Science, the researchers showed that regrowing the axons of specific neurons back to their natural target regions led to recovery, while random regrowth was not effective.

In a 2018 study in Nature, the team identified a treatment approach that triggers axons to regrow after spinal cord injury in rodents. But even as that approach successfully led to the regeneration of axons across severe spinal cord lesions, achieving functional recovery remained a significant challenge.

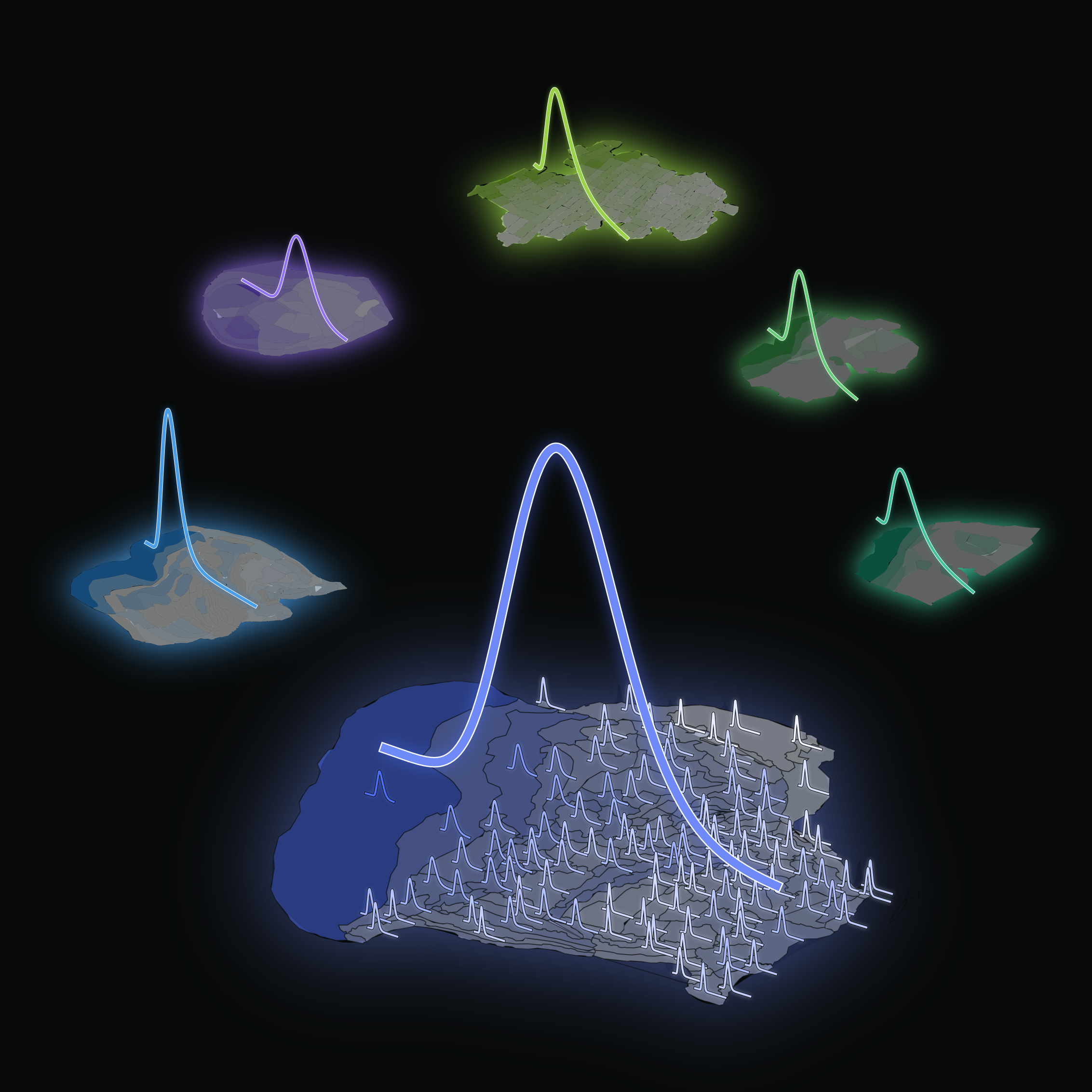

For the new study, the team of researchers from UCLA, the Swiss Federal Institute of Technology, and Harvard University aimed to determine whether directing the regeneration of axons from specific neuronal subpopulations to their natural target regions could lead to meaningful functional restoration after spinal cord injury in mice. They first used advanced genetic analysis to identify nerve cell groups that enable walking improvement after a partial spinal cord injury.

The researchers then found that merely regenerating axons from these nerve cells across the spinal cord lesion, without specific guidance, had no impact on functional recovery. However, when they refined the strategy to use chemical signals that attract and guide the regenerating axons to their natural target region in the lumbar spinal cord, walking ability improved significantly in a mouse model of complete spinal cord injury.

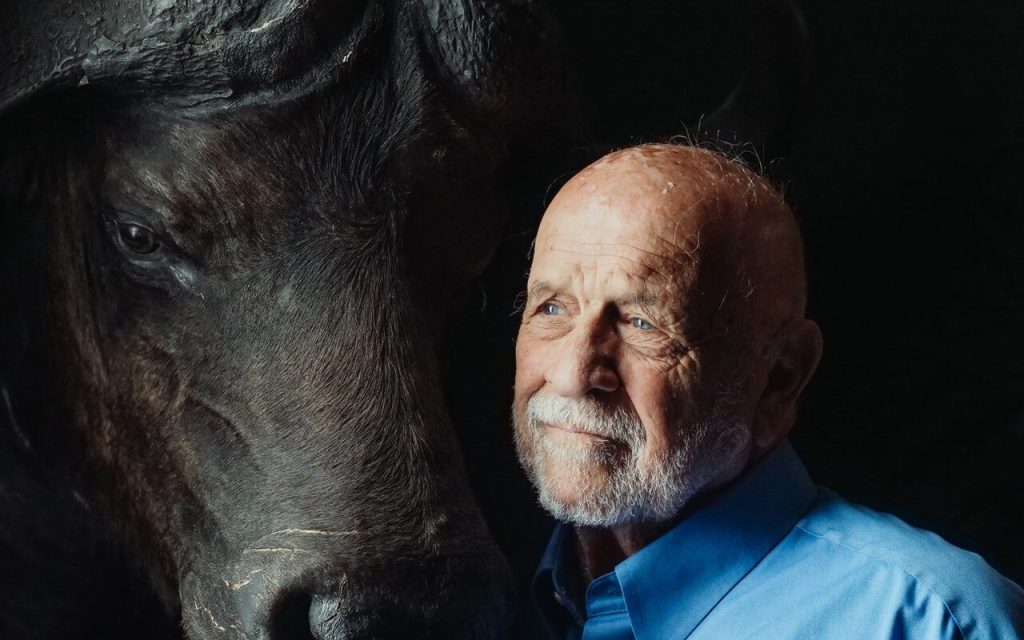

“Our study provides crucial insights into the intricacies of axon regeneration and requirements for functional recovery after spinal cord injuries,” said Michael Sofroniew, MD, PhD, professor of neurobiology at the David Geffen School of Medicine at UCLA and a senior author of the new study. “It highlights the necessity of not only regenerating axons across lesions but also of actively guiding them to reach their natural target regions to achieve meaningful neurological restoration.”

The authors say that re-establishing the projections of specific neuronal subpopulations to their natural target regions holds significant promise for the development of therapies aimed at restoring neurological functions in larger animals and humans. However, they also acknowledge that promoting regeneration over longer distances in non-rodents is complex and will require strategies with intricate spatial and temporal features. Still, they conclude that applying the principles laid out in their work “will unlock the framework to achieve meaningful repair of the injured spinal cord and may expedite repair after other forms of central nervous system injury and disease.”

Source: University of California – Los Angeles Health Sciences