Unexpected Discovery Opens Up Stroke and Cardiac Arrest Treatments

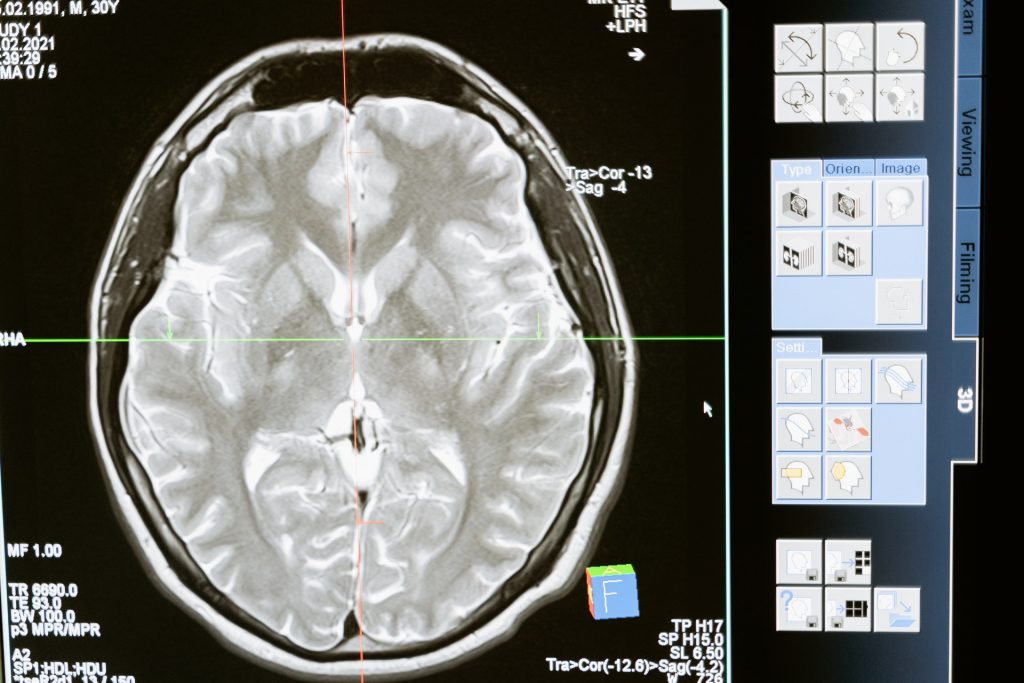

In a surprising discovery, researchers at Massachusetts General Hospital (MGH) identified a mechanism that protects the brain from the effects of hypoxia. This serendipitous finding, which they report in Nature Communications, could help develop therapies for strokes, as well as brain injury resulting from cardiac arrest.

However, this study began with a very different objective, explained senior author Fumito Ichinose, MD, PhD, an attending physician in the Department of Anesthesia, Critical Care and Pain Medicine at MGH, and principal investigator in the Anesthesia Center for Critical Care Research. Ichinose and his team are developing techniques for inducing suspended animation, a state similar to hibernation where a human’s body functions are temporarily slowed or halted for later revival.

Ichinose believes that the ability to safely induce suspended animation could have valuable medical applications, such as pausing the life processes of a patient with an incurable disease until an effective therapy is found. Suspended animation, often seen in science fiction and currently studied by NASA, could also allow humans to travel long distances in space.

A 2005 study found that inhaling a gas called hydrogen sulfide caused mice to enter a state of suspended animation. Hydrogen sulfide, which smells like rotten eggs, is sometimes called ‘sewer gas.’ Oxygen deprivation in a mammal’s brain leads to increased production of hydrogen sulfide. As the gas accumulates in the tissue, it can halt energy metabolism in neurons, causing them to die. Oxygen deprivation is a hallmark of ischaemic stroke, the most common type of stroke, and of other injuries to the brain.

Dr Ichinose and his team initially set out to study the effects of exposing mice to hydrogen sulfide repeatedly, over an extended period. At first, the mice entered a suspended-animation-like state: their body temperatures dropped and they were immobile. “But, to our surprise, the mice very quickly became tolerant to the effects of inhaling hydrogen sulfide,” said Dr Ichinose. “By the fifth day, they acted normally and were no longer affected by hydrogen sulfide.”

Interestingly, the mice that became tolerant to hydrogen sulfide were also able to tolerate severe hypoxia. Ichinose’s group suspected that enzymes in the brain that metabolise sulfide might be responsible for this. They discovered that levels of one particular enzyme, called sulfide:quinone oxidoreductase (SQOR), rose in the brains of mice when they breathed hydrogen sulfide for several days. They thus hypothesised that SQOR plays a role in resistance to hypoxia.

Nature offers strong evidence for this. For example, female mammals resist hypoxia better than males, and females have higher levels of SQOR. When SQOR levels are artificially reduced in females, their hypoxia resistance drops. (Oestrogen may be responsible for the observed increase in SQOR, as the hypoxia protection is lost when a female mammal’s oestrogen-producing ovaries are removed.) Additionally, some hibernating animals, such as the thirteen-lined ground squirrel, are highly tolerant of hypoxia, which allows them to survive as their bodies’ metabolism slows down during the winter. The brain of a typical ground squirrel has 100 times more SQOR than that of a similar-sized rat. When the researchers ‘switched off’ expression of SQOR in the squirrels’ brains, the animals lost their protection against the effects of hypoxia.

Conversely, when the researchers artificially increased SQOR levels in the brains of mice, “they developed a robust defense against hypoxia,” explained Dr Ichinose. His team increased the level of SQOR using gene therapy, which is currently a technically complex and impractical approach. As an alternative, the team demonstrated that ‘scavenging’ sulfide with an experimental drug called SS-20 reduced levels of the gas, thereby sparing the brains of hypoxic mice.

Human brains have very low levels of SQOR, meaning that even a modest accumulation of hydrogen sulfide can be harmful, said Dr Ichinose. “We hope that someday we’ll have drugs that could work like SQOR in the body,” he said, noting that his lab is studying SS-20 and several other candidates. Such medications could be used to treat ischaemic strokes, as well as patients who have suffered cardiac arrest, which can lead to hypoxia. Dr Ichinose’s lab is also investigating how hydrogen sulfide affects other parts of the body. For example, hydrogen sulfide is known to accumulate in other conditions, such as certain types of Leigh syndrome, a rare but severe neurological disorder that usually leads to early death. “For some patients,” said Dr Ichinose, “treatment with a sulfide scavenger might be lifesaving.”

Source: Medical Xpress

Journal information: Eizo Marutani et al, Sulfide catabolism ameliorates hypoxic brain injury, Nature Communications (2021). DOI: 10.1038/s41467-021-23363-x