People with ‘Young Brains’ Outlive ‘Old-brained’ Peers, Research Finds

A blood-test analysis developed at Stanford Medicine can determine the “biological ages” of 11 separate organ systems in individuals’ bodies and predict the health consequences.

In addition to our chronological age, research has shown that we also have what’s called a “biological age,” a cryptic but more accurate measure of our physiological condition and of our likelihood of developing aging-associated disorders, from heart trouble to Alzheimer’s disease.

Determining how old someone’s internal organs are is far harder than judging age from wrinkles and graying hair. Internal organs also age at different rates, according to a new study by Stanford Medicine investigators.

“We’ve developed a blood-based indicator of the age of your organs,” said Tony Wyss-Coray, PhD, professor of neurology and neurological sciences and director of the Knight Initiative for Brain Resilience at the Wu Tsai Neurosciences Institute. “With this indicator, we can assess the age of an organ today and predict the odds of your getting a disease associated with that organ 10 years later.”

The researchers can even predict who is most likely to die from medical conditions associated with one or more of the 11 organ systems they examined: brain, muscle, heart, lung, arteries, liver, kidneys, pancreas, immune system, intestine and fat.

The biological age of one organ, the brain, plays an outsized role in determining how long you have left to live, Wyss-Coray said.

“The brain is the gatekeeper of longevity,” he said. “If you’ve got an old brain, you have an increased likelihood of mortality. If you’ve got a young brain, you’re probably going to live longer.”

Wyss-Coray is the senior author of the study, published online July 9 in Nature Medicine. The lead author is Hamilton Oh, PhD, a former graduate student in Wyss-Coray’s group.

Eleven organ systems, 3000 proteins, 45 000 people

The scientists analyzed data from 44 498 randomly selected participants, ages 40 to 70, drawn from the UK Biobank. This ongoing effort has collected multiple blood samples and updated medical records from some 600 000 individuals over several years. The participants in this study were monitored for up to 17 years for changes in their health status.

Wyss-Coray’s team used an advanced, commercially available laboratory technology that measured the levels of nearly 3000 proteins in each participant’s blood. Some 15% of these proteins can be traced to a single organ of origin, and many of the others are produced by multiple organs.
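The article does not say how proteins were traced to a single organ. One common approach, assumed here rather than taken from the study, is to call a protein organ-enriched when its expression in one organ is at least some fold higher than in any other organ. The 4-fold cutoff, the function name `organ_enriched` and the input format below are all hypothetical.

```python
def organ_enriched(expression, fold=4.0):
    """Map each organ-enriched protein to its (assumed) organ of origin.

    expression: {protein: {organ: expression level}} (hypothetical input format).
    A protein counts as enriched when its highest-expressing organ exceeds
    the runner-up by at least `fold` (an assumed cutoff, not the study's).
    """
    enriched = {}
    for protein, by_organ in expression.items():
        # Rank organs from highest to lowest expression of this protein
        ranked = sorted(by_organ.items(), key=lambda item: item[1], reverse=True)
        if not ranked or ranked[0][1] <= 0:
            continue  # no usable expression data for this protein
        top_organ, top_level = ranked[0]
        # A protein seen in only one organ has no runner-up, so compare against zero
        runner_up = ranked[1][1] if len(ranked) > 1 else 0.0
        if top_level >= fold * runner_up:
            enriched[protein] = top_organ
    return enriched


example = {
    "protein_a": {"brain": 120.0, "liver": 10.0, "heart": 5.0},
    "protein_b": {"liver": 50.0, "kidney": 30.0},
}
print(organ_enriched(example))  # {'protein_a': 'brain'}
```

Proteins that fail the cutoff, like `protein_b` here, would fall into the multiple-organ group.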

The researchers fed everyone’s blood protein levels into a computer and determined the average level of each organ-specific protein, adjusted for age. From this, the scientists built an algorithm that measured how much each person’s composite protein “signature” for each organ differed from the average for people of the same age.
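The age adjustment described here can be sketched under simplifying assumptions: each person is compared only with others in the same 5-year age band, each organ protein is turned into a z-score within that band, and the organ’s composite deviation is the mean of those z-scores. The function name, the age banding and the averaging rule are hypothetical; the article does not describe the study’s actual model.

```python
from collections import defaultdict
from statistics import mean, pstdev


def age_adjusted_scores(samples, organ_proteins, band_width=5):
    """Composite age-adjusted deviation of one organ's proteins for each sample.

    samples: list of {"age": int years, "proteins": {name: level}} (hypothetical format).
    organ_proteins: names of the proteins assigned to this organ.
    """
    # Group people into age bands so each is compared with peers of similar age
    bands = defaultdict(list)
    for index, sample in enumerate(samples):
        bands[sample["age"] // band_width].append(index)

    scores = [0.0] * len(samples)
    for members in bands.values():
        # Mean and spread of each organ protein within this age band
        stats = {}
        for protein in organ_proteins:
            levels = [samples[i]["proteins"][protein] for i in members]
            stats[protein] = (mean(levels), pstdev(levels))
        for i in members:
            z_scores = [
                (samples[i]["proteins"][protein] - mu) / sd
                for protein, (mu, sd) in stats.items()
                if sd > 0  # skip proteins with no spread in this band
            ]
            # Average the per-protein deviations into one organ "signature" score
            scores[i] = mean(z_scores) if z_scores else 0.0
    return scores
```

Banding is the bluntest form of age adjustment; a regression on age would avoid the hard band edges, at the cost of a modeling assumption about how protein levels change with age.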

Based on the differences between each individual’s organ-assigned protein levels and the age-adjusted averages, the algorithm assigned a biological age to each of the 11 organs or organ systems for each participant. It also measured how far each organ’s multiprotein signature deviated, in either direction, from the average for people of the same chronological age. These protein signatures served as proxies for the relative biological condition of individual organs. A deviation of more than 1.5 standard deviations from the average put a person’s organ in the “extremely aged” or “extremely youthful” category.
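The 1.5-standard-deviation cutoff can be illustrated by standardizing one organ’s scores across the cohort and labeling the two tails. This is a minimal sketch: the function name is hypothetical, it assumes that a higher score means an older-looking organ, and it treats exactly 1.5 as extreme, following the “1.5-or-greater” wording used for the one-third figure.

```python
from statistics import mean, pstdev


def label_extremes(scores, threshold=1.5):
    """Label each organ score as extremely aged, extremely youthful, or normal.

    Assumes (hypothetically) that higher scores mean an older-looking organ.
    """
    center, spread = mean(scores), pstdev(scores)
    labels = []
    for score in scores:
        # Distance from the cohort average, in standard deviations
        z = (score - center) / spread if spread > 0 else 0.0
        if z >= threshold:
            labels.append("extremely aged")
        elif z <= -threshold:
            labels.append("extremely youthful")
        else:
            labels.append("normal")
    return labels
```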

One-third of the participants had at least one organ that deviated from the average by 1.5 standard deviations or more, and the investigators designated any such organ “extremely aged” or “extremely youthful.” One in four participants had multiple extremely aged or youthful organs.

For the brain, “extremely aged” meant being among the 6% to 7% of participants whose brain protein signatures fell at the older end of the biological-age distribution. “Extremely youthful” brains fell into the 6% to 7% at the opposite end.
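The 6% to 7% figure is what a bell-shaped distribution would predict. Assuming organ-age scores are roughly normally distributed (the article does not say so), the share of people lying more than 1.5 standard deviations beyond the mean on one side works out to about 6.7%:

```python
import math


def one_tail_fraction(threshold_sd):
    """Share of a normal distribution lying more than threshold_sd standard deviations above its mean."""
    # The upper-tail probability of a standard normal is erfc(z / sqrt(2)) / 2
    return 0.5 * math.erfc(threshold_sd / math.sqrt(2))


print(f"{one_tail_fraction(1.5):.1%}")  # 6.7%
```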

Health outcomes foretold

The algorithm also predicted people’s future health, organ by organ, based on their current organs’ biological age. Wyss-Coray and his colleagues checked for associations between extremely aged organs and any of 15 different disorders including Alzheimer’s and Parkinson’s diseases, chronic liver or kidney disease, Type 2 diabetes, two different heart conditions and two different lung diseases, rheumatoid arthritis and osteoarthritis, and more.

Risks for several of those diseases were associated with the biological ages of several different organs. But the strongest associations were between a biologically aged organ and the chance that the individual would develop a disease associated with that same organ. For example, having an extremely aged heart predicted higher risk of atrial fibrillation or heart failure, having aged lungs predicted heightened chronic obstructive pulmonary disease (COPD) risk, and having an old brain predicted higher risk for Alzheimer’s disease.
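The article reports these associations without saying how they were computed. As a simplified illustration only, a crude risk ratio compares the share of people who developed a disease among those with an extremely aged organ with the share among those whose organ is aging normally. Long-term cohort studies typically use survival models that also account for follow-up time and other factors, which this sketch omits; the function name and the counts below are invented.

```python
def risk_ratio(cases_exposed, n_exposed, cases_reference, n_reference):
    """Crude risk ratio: disease incidence in the exposed group divided by incidence in the reference group."""
    if n_exposed <= 0 or n_reference <= 0 or cases_reference <= 0:
        raise ValueError("group sizes and reference cases must be positive")
    return (cases_exposed / n_exposed) / (cases_reference / n_reference)


# Invented counts: 30 cases among 1000 people with an extremely aged organ,
# 100 cases among 10000 people whose organ is aging normally
print(round(risk_ratio(30, 1000, 100, 10000), 2))
```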

The link between brain age and Alzheimer’s disease was particularly powerful: a person with an extremely aged brain had 3.1 times the risk of developing Alzheimer’s of a person with a normally aging brain. Meanwhile, an extremely youthful brain was especially protective against Alzheimer’s, with a risk barely one-fourth that of a person with a normally aging brain.

In addition, Wyss-Coray said, brain age was the best single predictor of overall mortality. Having an extremely aged brain increased subjects’ risk of dying by 182% over a roughly 15-year period, while individuals with extremely youthful brains had an overall 40% reduction in their risk of dying over the same duration.
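Percent changes and ratios describe the same comparison in two forms, and they are easy to misread: a 182% increase in risk means about 2.82 times the reference risk (not 1.82 times), and a 40% reduction means 0.60 times. The helper names below are hypothetical, and the article does not say whether its figures are hazard ratios or simple risk ratios; the conversion is the same either way.

```python
def ratio_from_percent_change(percent):
    """Turn a relative change in risk (e.g. +182 or -40) into a multiple of the reference risk."""
    return 1 + percent / 100


def percent_change_from_ratio(ratio):
    """Turn a multiple of the reference risk (e.g. 3.1) into a percent change."""
    return (ratio - 1) * 100


# Mortality figures quoted in the article, expressed as multiples of the reference risk
for label, pct in [("extremely aged brain", 182), ("extremely youthful brain", -40)]:
    print(label, round(ratio_from_percent_change(pct), 2))

# Alzheimer's figures quoted as multiples, expressed as percent changes ("one-fourth" taken as 0.25)
for label, ratio in [("extremely aged brain", 3.1), ("extremely youthful brain", 0.25)]:
    print(label, round(percent_change_from_ratio(ratio)), "%")
```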

Predicting the disease, then preventing it

“This approach could lead to human experiments testing new longevity interventions for their effects on the biological ages of individual organs in individual people,” Wyss-Coray said.

Medical researchers may, for example, be able to use extreme brain age as a proxy for impending Alzheimer’s disease and intervene before the onset of outward symptoms, when there’s still time to arrest it, he said.

Careful collection of data on lifestyle, diet and the intake of prescribed or supplemental substances in clinical trials, combined with organ-age assessments, could shed light on how much each of those factors contributes to the aging of various organs, as well as on whether existing approved drugs can restore organ youth before people develop a disease for which an organ’s advanced biological age puts them at high risk, Wyss-Coray added.

If commercialized, the test could be available in the next two to three years, Wyss-Coray said. “The cost will come down as we focus on fewer key organs, such as the brain, heart and immune system, to get more resolution and stronger links to specific diseases.”

Source: Stanford University

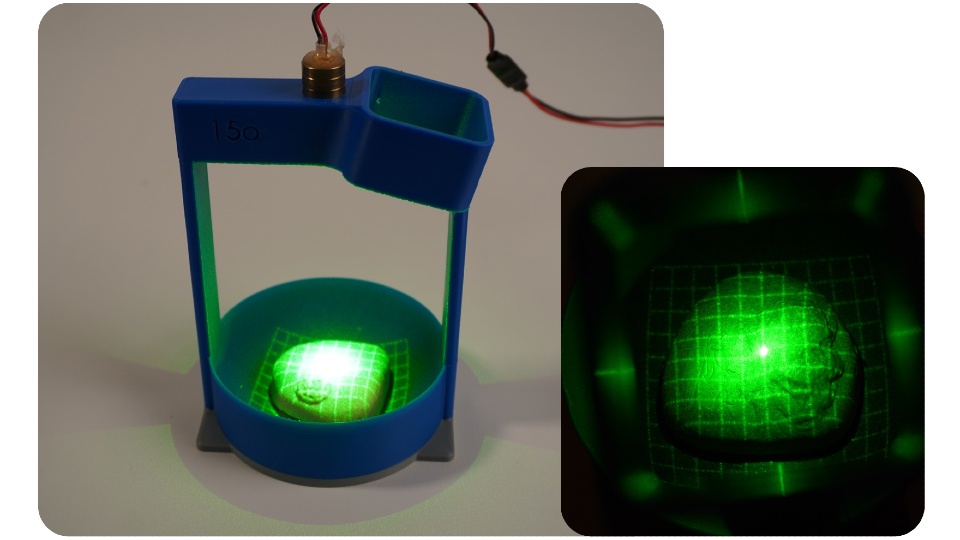

PRO check prototype one demonstrated how laser gridlines and a camera can be used to image the surface of the prostate.

PRO check prototype one demonstrated how laser gridlines and a camera can be used to image the surface of the prostate.